Computer Vision

Extracting meaning and building representations of visual objects and events in the world.

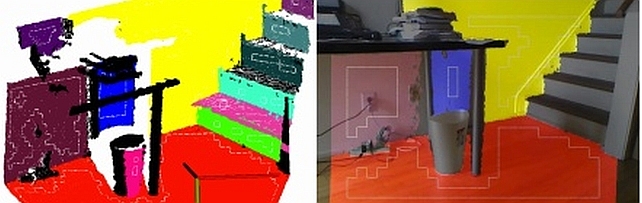

Our main research themes cover the areas of deep learning and artificial intelligence for object and action detection, classification and scene understanding, robotic vision and object manipulation, 3D processing and computational geometry, as well as simulation of physical systems to enhance machine learning systems.


Researchers

- Anoop Cherian
- Tim K. Marks
- Michael J. Jones
- Chiori Hori
- Suhas Lohit
- Jonathan Le Roux
- Hassan Mansour
- Kuan-Chuan Peng
- Moitreya Chatterjee
- Matthew Brand
- Siddarth Jain
- Pedro Miraldo
- Radu Corcodel
- Petros T. Boufounos
- Daniel N. Nikovski
- Ye Wang
- Anthony Vetro
- Gordon Wichern
- William S. Yerazunis
- Toshiaki Koike-Akino
- Dehong Liu
- Arvind Raghunathan
- Abraham P. Vinod
- Pu (Perry) Wang
- Avishai Weiss
- Stefano Di Cairano
- Lalit Manam
- Yoshiki Masuyama
- Kaen Kogashi
- Yanting Ma
- Philip V. Orlik
- Joshua Rapp
- Alexander Schperberg
- Huifang Sun
- Yebin Wang
- Kenji Inomata
- Jin Kato
- Jing Liu
- Kei Suzuki


Awards


AWARD: Best Paper - Honorable Mention Award at WACV 2021

Date: January 6, 2021

Awarded to: Rushil Anirudh, Suhas Lohit, Pavan Turaga

MERL Contact: Suhas Lohit

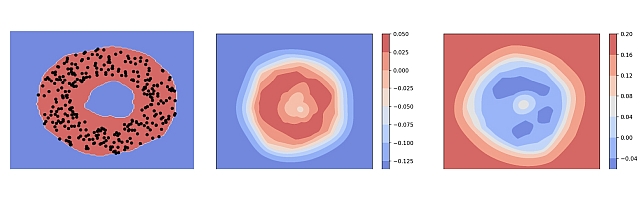

Research Areas: Computational Sensing, Computer Vision, Machine Learning

Brief: A team of researchers from Mitsubishi Electric Research Laboratories (MERL), Lawrence Livermore National Laboratory (LLNL) and Arizona State University (ASU) received the Best Paper Honorable Mention Award at WACV 2021 for their paper "Generative Patch Priors for Practical Compressive Image Recovery".

The paper proposes a novel model of natural images as a composition of small patches which are obtained from a deep generative network. This is unlike prior approaches where the networks attempt to model image-level distributions and are unable to generalize outside training distributions. The key idea in this paper is that learning patch-level statistics is far easier. As the authors demonstrate, this model can then be used to efficiently solve challenging inverse problems in imaging such as compressive image recovery and inpainting even from very few measurements for diverse natural scenes.


AWARD: MERL Researchers win Best Paper Award at ICCV 2019 Workshop on Statistical Deep Learning in Computer Vision

Date: October 27, 2019

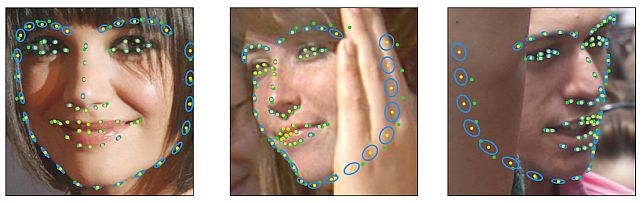

Awarded to: Abhinav Kumar, Tim K. Marks, Wenxuan Mou, Chen Feng, Xiaoming Liu

MERL Contact: Tim K. Marks

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning

Brief: MERL researcher Tim Marks, former MERL interns Abhinav Kumar and Wenxuan Mou, and MERL consultants Professor Chen Feng (NYU) and Professor Xiaoming Liu (MSU) received the Best Oral Paper Award at the IEEE/CVF International Conference on Computer Vision (ICCV) 2019 Workshop on Statistical Deep Learning in Computer Vision (SDL-CV) held in Seoul, Korea. Their paper, entitled "UGLLI Face Alignment: Estimating Uncertainty with Gaussian Log-Likelihood Loss," describes a method that, given an image of a face, estimates not only the locations of facial landmarks but also the uncertainty of each landmark location estimate.


AWARD: CVPR 2011 Longuet-Higgins Prize

Date: June 25, 2011

Awarded to: Paul A. Viola and Michael J. Jones

Awarded for: "Rapid Object Detection using a Boosted Cascade of Simple Features"

Awarded by: Conference on Computer Vision and Pattern Recognition (CVPR)

MERL Contact: Michael J. Jones

Research Area: Machine Learning

Brief: The prize recognizes the paper from 10 years earlier with the largest impact on the field, in this case "Rapid Object Detection using a Boosted Cascade of Simple Features", originally published at the Conference on Computer Vision and Pattern Recognition (CVPR 2001).


News & Events


EVENT: MERL Contributes to ICASSP 2026

Date: Monday, May 4, 2026 - Friday, May 8, 2026

Location: Barcelona, Spain

MERL Contacts: Wael H. Ali; Petros T. Boufounos; Chiori Hori; Jonathan Le Roux; Yanting Ma; Hassan Mansour; Yoshiki Masuyama; Joshua Rapp; Anthony Vetro; Pu (Perry) Wang; Gordon Wichern

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Machine Learning, Optimization, Signal Processing, Speech & Audio

Brief: MERL has made numerous contributions to both the organization and technical program of ICASSP 2026, which is being held in Barcelona, Spain from May 4-8, 2026.

Sponsorship

MERL is proud to be a Silver Patron of the conference and will participate in the student job fair on Thursday, May 7. Please join this session to learn more about employment opportunities at MERL, including openings for research scientists, post-docs, and interns. MERL Distinguished Research Scientists Petros T. Boufounos and Jonathan Le Roux will also present a spotlight session on MERL’s research in signal processing on Tuesday, May 5 at 13:05. Finally, MERL will sponsor a photo booth on Thursday, May 7 and Friday, May 8, where ICASSP participants can take professional photos with friends and colleagues, which will be emailed to them.

MERL is also pleased to be the sponsor of two IEEE Awards that will be presented at the conference. We congratulate Prof. Nasir Ahmed, the recipient of the 2026 IEEE Fourier Award for Signal Processing, and Dr. Alex Acero, the recipient of the 2026 IEEE James L. Flanagan Speech and Audio Processing Award.

Technical Program

MERL is presenting 8 papers in the main conference on a wide range of topics including source separation, spatial audio, neural audio codecs, radar-based pose estimation, camera-based airflow sensing, radar array processing, and optimization. Another paper on neural speech codecs will be presented at the Low-Resource Audio Codec (LRAC) Satellite Workshop. MERL researchers will also present two articles published in IEEE Open Journal of Signal Processing (OJSP) on music source separation and head-related transfer function (HRTF) modeling. Finally, Speech and Audio Team members Yoshiki Masuyama and Jonathan Le Roux co-organized a Special Session on Neural Spatial Audio Processing, which will feature six oral presentations.

About ICASSP

ICASSP is the flagship conference of the IEEE Signal Processing Society, and the world's largest and most comprehensive technical conference focused on the research advances and latest technological development in signal and information processing. The event attracts more than 4000 participants each year.


TALK: [MERL Seminar Series 2026] Jialong Wu presents talk titled "World Models and Human-like Reasoning"

Date & Time: Wednesday, March 25, 2026; 11:00 AM

Speaker: Jialong Wu, Tsinghua University

MERL Host: Anoop Cherian

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning

Abstract: This talk introduces the background and key findings of our recent work, "Visual Generation Unlocks Human-Like Reasoning through Multimodal World Models," which answers the question of when and how visual generation enabled by unified multimodal models (UMMs) benefits reasoning. We take a world model perspective, inspired by human cognition. Specifically, humans construct mental models of the world, representing information and knowledge through two complementary channels (verbal and visual) to support reasoning, planning, and decision-making. In contrast, recent advances in large language models (LLMs) and vision-language models (VLMs) largely rely on verbal chain-of-thought (CoT) reasoning, leveraging primarily symbolic and linguistic world knowledge. UMMs open a new paradigm by using visual generation for visual world modeling, advancing more human-like reasoning on tasks grounded in the physical world. In this work, we formalize the atomic capabilities of world models and world model-based chain-of-thought reasoning. We highlight the richer informativeness and complementary prior knowledge afforded by visual world modeling, leading to our visual superiority hypothesis for tasks grounded in the physical world. We identify and design tasks that necessitate interleaved visual-verbal CoT reasoning, constructing a new evaluation suite, VisWorld-Eval. Through controlled experiments on BAGEL, we show that interleaved CoT significantly outperforms purely verbal CoT on tasks that favor visual world modeling, strongly supporting our insights.



Research Highlights


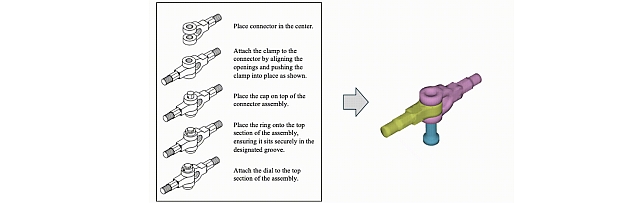

AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects -

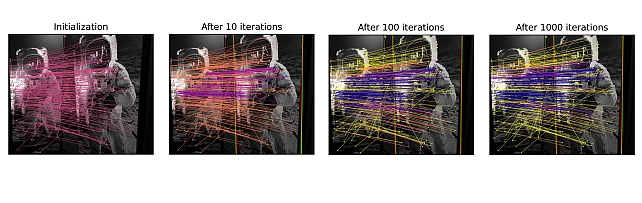

SLAM-MER: Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling -

Parallel Rigidity Matters for Bundle Adjustment -

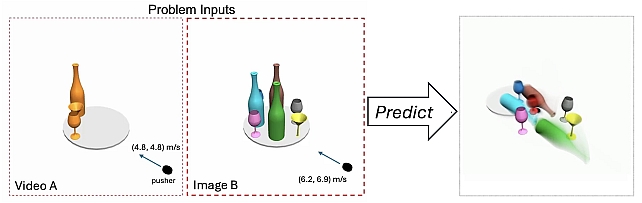

LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines -

SAC-GNC: SAmple Consensus for adaptive Graduated Non-Convexity -

PS-NeuS: A Probability-guided Sampler for Neural Implicit Surface Rendering -

TI2V-Zero: Zero-Shot Image Conditioning for Text-to-Video Diffusion Models -

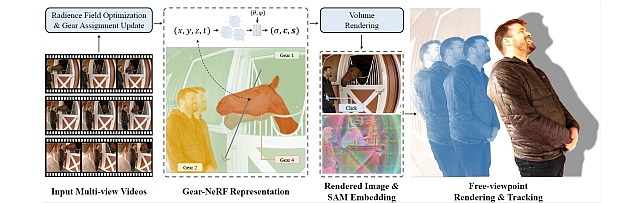

Gear-NeRF: Free-Viewpoint Rendering and Tracking with Motion-Aware Spatio-Temporal Sampling -

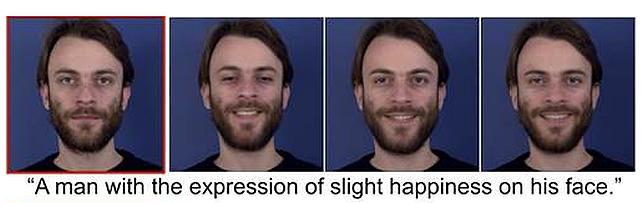

Steered Diffusion -

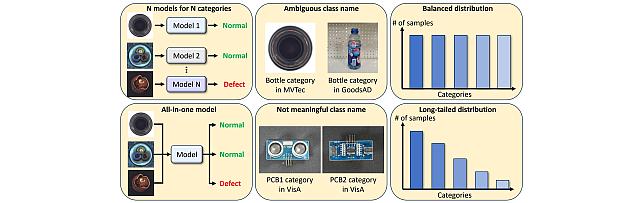

Robust Machine Learning -

Video Anomaly Detection -

MERL Shopping Dataset -

Point-Plane SLAM

-

-

Internships

CV0075: Internship - Multimodal Embodied AI

CA0283: Internship - Active SLAM for Aerial Robots

CA0221: Internship - Robust Estimation for Computer Vision


Recent Publications

- Tianye Ding, Yiming Xie, Yiqing Liang, Moitreya Chatterjee, Pedro Miraldo, Huaizu Jiang, "LASER: Layer-wise Scale Alignment for Training-Free Streaming 4D Reconstruction", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), May 2026. TR2026-055.

      @inproceedings{Ding2026may,
        author = {Ding, Tianye and Xie, Yiming and Liang, Yiqing and Chatterjee, Moitreya and Miraldo, Pedro and Jiang, Huaizu},
        title = {{LASER: Layer-wise Scale Alignment for Training-Free Streaming 4D Reconstruction}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-055}
      }

- Lalit Manam, Venu Govindu, "Parallel Rigidity Matters for Bundle Adjustment", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), May 2026. TR2026-053.

      @inproceedings{Lalit2026may,
        author = {Manam, Lalit and Govindu, Venu},
        title = {{Parallel Rigidity Matters for Bundle Adjustment}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-053}
      }

- Valter Piedade, Lalit Manam, Masashi Yamazaki, Pedro Miraldo, "Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), May 2026. TR2026-056.

      @inproceedings{Piedade2026may,
        author = {Piedade, Valter and Manam, Lalit and Yamazaki, Masashi and Miraldo, Pedro},
        title = {{Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-056}
      }

- Anoop Cherian, Radu Corcodel, Siddarth Jain, Diego Romeres, "LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines", International Conference on Artificial Intelligence and Statistics (AISTATS), May 2026. TR2026-052.

      @inproceedings{Cherian2026may,
        author = {Cherian, Anoop and Corcodel, Radu and Jain, Siddarth and Romeres, Diego},
        title = {{LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines}},
        booktitle = {International Conference on Artificial Intelligence and Statistics (AISTATS)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-052}
      }

- Vineet Shenoy, Suhas Lohit, Hassan Mansour, Rama Chellappa, Tim K. Marks, "Recovering Pulse Waves from Video Using Deep Unrolling and Deep Equilibrium Models", IEEE Transactions on Image Processing, DOI: 10.1109/TIP.2026.3671653, Vol. 35, pp. 2755-2770, March 2026. TR2026-031.

      @article{Shenoy2026mar,
        author = {Shenoy, Vineet and Lohit, Suhas and Mansour, Hassan and Chellappa, Rama and Marks, Tim K.},
        title = {{Recovering Pulse Waves from Video Using Deep Unrolling and Deep Equilibrium Models}},
        journal = {IEEE Transactions on Image Processing},
        year = 2026,
        volume = 35,
        pages = {2755--2770},
        month = mar,
        doi = {10.1109/TIP.2026.3671653},
        issn = {1941-0042},
        url = {https://www.merl.com/publications/TR2026-031}
      }

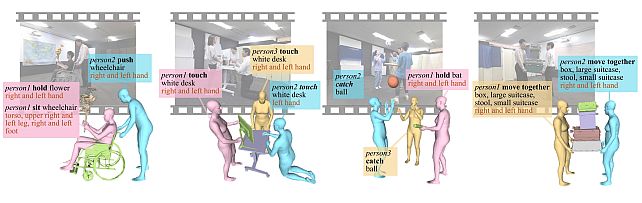

- Kaen Kogashi, Anoop Cherian, Meng-Yu Jennifer Kuo, "MMHOI: Modeling Complex 3D Multi-Human Multi-Object Interactions", IEEE Winter Conference on Applications of Computer Vision (WACV), March 2026, pp. 1512-1521. TR2026-029.

      @inproceedings{Kogashi2026mar,
        author = {Kogashi, Kaen and Cherian, Anoop and Kuo, Meng-Yu Jennifer},
        title = {{MMHOI: Modeling Complex 3D Multi-Human Multi-Object Interactions}},
        booktitle = {IEEE Winter Conference on Applications of Computer Vision (WACV)},
        year = 2026,
        pages = {1512--1521},
        month = mar,
        url = {https://www.merl.com/publications/TR2026-029}
      }

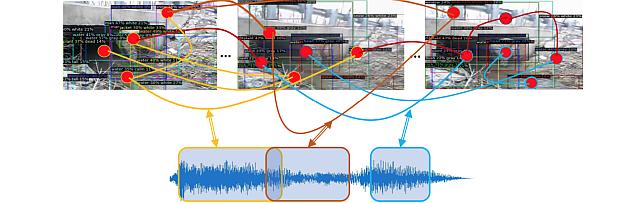

- Furkan Mumcu, Michael J. Jones, Yasin Yilmaz, Anoop Cherian, "Leveraging Multimodal LLM Descriptions of Activity for Explainable Semi-Supervised Video Anomaly Detection", Transactions on Machine Learning Research, February 2026. TR2026-027.

      @article{Mumcu2026feb2,
        author = {Mumcu, Furkan and Jones, Michael J. and Yilmaz, Yasin and Cherian, Anoop},
        title = {{Leveraging Multimodal LLM Descriptions of Activity for Explainable Semi-Supervised Video Anomaly Detection}},
        journal = {Transactions on Machine Learning Research},
        year = 2026,
        month = feb,
        url = {https://www.merl.com/publications/TR2026-027}
      }

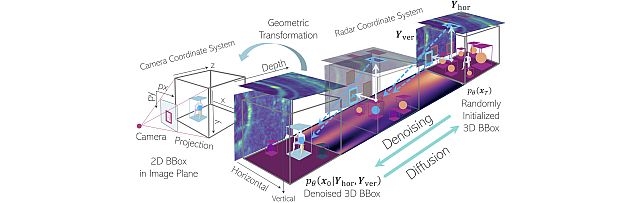

- Ryoma Yataka, Pu Wang, Petros T. Boufounos, Ryuhei Takahashi, "Indoor Multi-View Radar Object Detection via 3D Bounding Box Diffusion", AAAI Conference on Artificial Intelligence, DOI: 10.1609/aaai.v40i22.38939, January 2026, vol. 40, pp. 18710-18718. TR2026-019.

      @inproceedings{Yataka2026jan,
        author = {Yataka, Ryoma and Wang, Pu and Boufounos, Petros T. and Takahashi, Ryuhei},
        title = {{Indoor Multi-View Radar Object Detection via 3D Bounding Box Diffusion}},
        booktitle = {AAAI Conference on Artificial Intelligence},
        year = 2026,
        volume = 40,
        number = 22,
        pages = {18710--18718},
        month = jan,
        doi = {10.1609/aaai.v40i22.38939},
        url = {https://www.merl.com/publications/TR2026-019}
      }



Software & Data Downloads


MMHOI Dataset: Modeling Complex 3D Multi-Human Multi-Object Interactions -

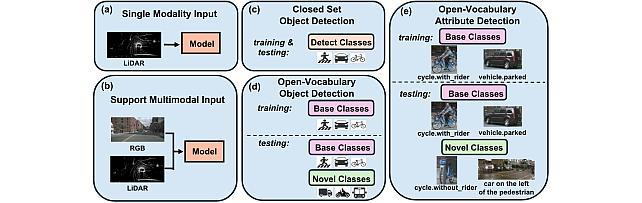

Open Vocabulary Attribute Detection Dataset -

multi-view Radar object dEtection with 3D bounding boX diffusiOn -

Long-Tailed Online Anomaly Detection dataset -

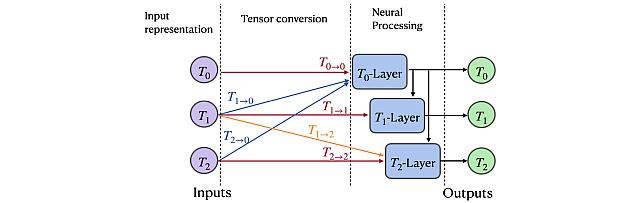

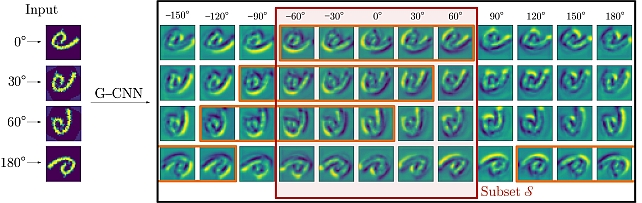

Group Representation Networks -

ComplexVAD Dataset -

Zero-Shot Image Conditioning for Text-to-Video Diffusion Models -

Gear Extensions of Neural Radiance Fields -

Long-Tailed Anomaly Detection Dataset -

Pixel-Grounded Prototypical Part Networks -

Steered Diffusion -

BAyesian Network for adaptive SAmple Consensus -

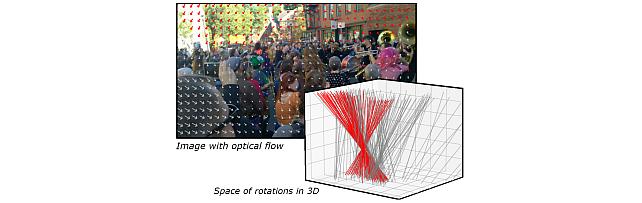

Robust Frame-to-Frame Camera Rotation Estimation in Crowded Scenes

-

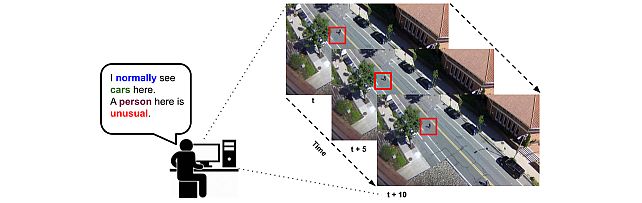

Explainable Video Anomaly Localization -

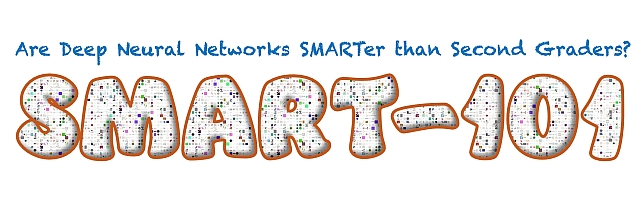

Simple Multimodal Algorithmic Reasoning Task Dataset -

Partial Group Convolutional Neural Networks -

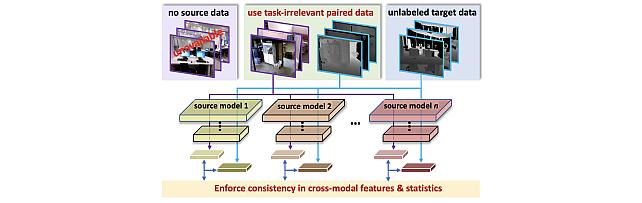

SOurce-free Cross-modal KnowledgE Transfer -

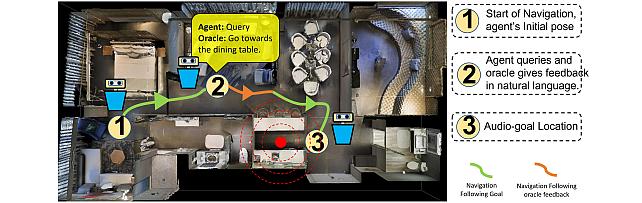

Audio-Visual-Language Embodied Navigation in 3D Environments -

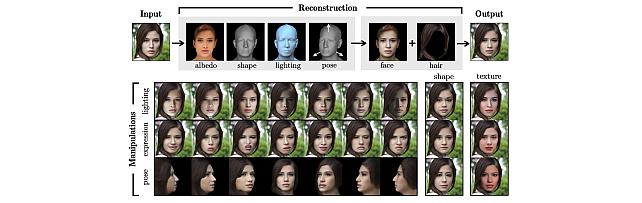

3D MOrphable STyleGAN -

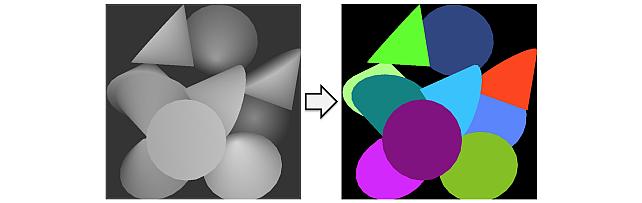

Instance Segmentation GAN -

Audio Visual Scene-Graph Segmentor -

Generalized One-class Discriminative Subspaces -

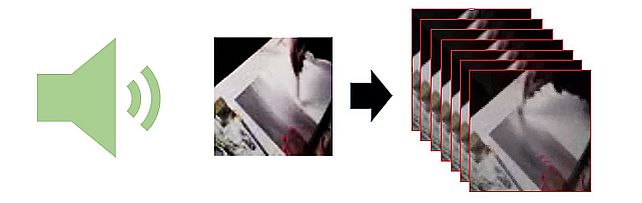

Generating Visual Dynamics from Sound and Context -

Adversarially-Contrastive Optimal Transport -

MotionNet -

Street Scene Dataset -

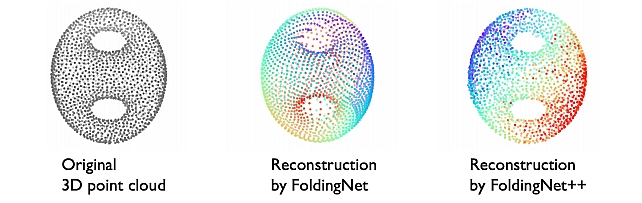

FoldingNet++ -

Landmarks’ Location, Uncertainty, and Visibility Likelihood -

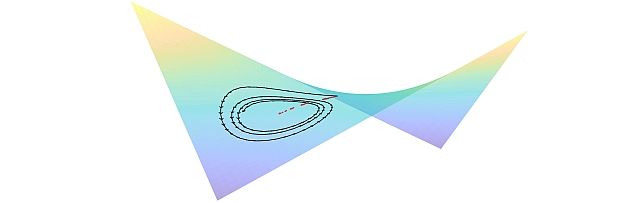

Gradient-based Nikaido-Isoda -

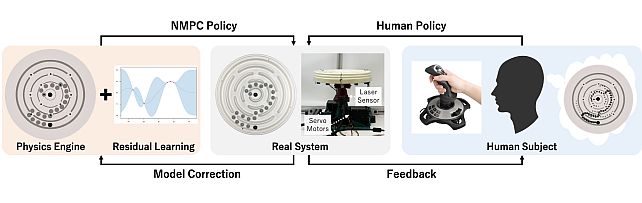

Circular Maze Environment -

Discriminative Subspace Pooling -

Kernel Correlation Network -

Fast Resampling on Point Clouds via Graphs -

FoldingNet -

MERL Shopping Dataset -

Joint Geodesic Upsampling -

Plane Extraction using Agglomerative Clustering -

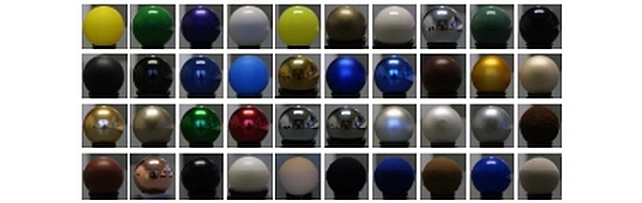

MERL BRDF Database -

Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines -

Physics-Aware Assembly of Complex Industrial Objects

-