AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects

A synthetic dataset of 2,789 industrial objects and an approach for multimodal instruction manual understanding.

MERL Researchers: Anoop Cherian, Tim K. Marks, Suhas Lohit, Moitreya Chatterjee.

Joint work with: Danrui Li (Rutgers University), Jiahao Zhang (Australian National University), and Bernhard Egger (Friedrich-Alexander-Universität Erlangen-Nürnberg).

Assembling objects from parts requires understanding multimodal instructions, linking them to 3D components, and predicting physically plausible 6-DoF motions for each assembly step. Existing datasets focus on simplified scenarios, overlooking shape complexities and assembly trajectories in industrial assemblies.

We introduce AssemblyBench, a synthetic dataset of 2,789 industrial objects with multimodal instruction manuals, corresponding 3D part models, and part assembly trajectories.

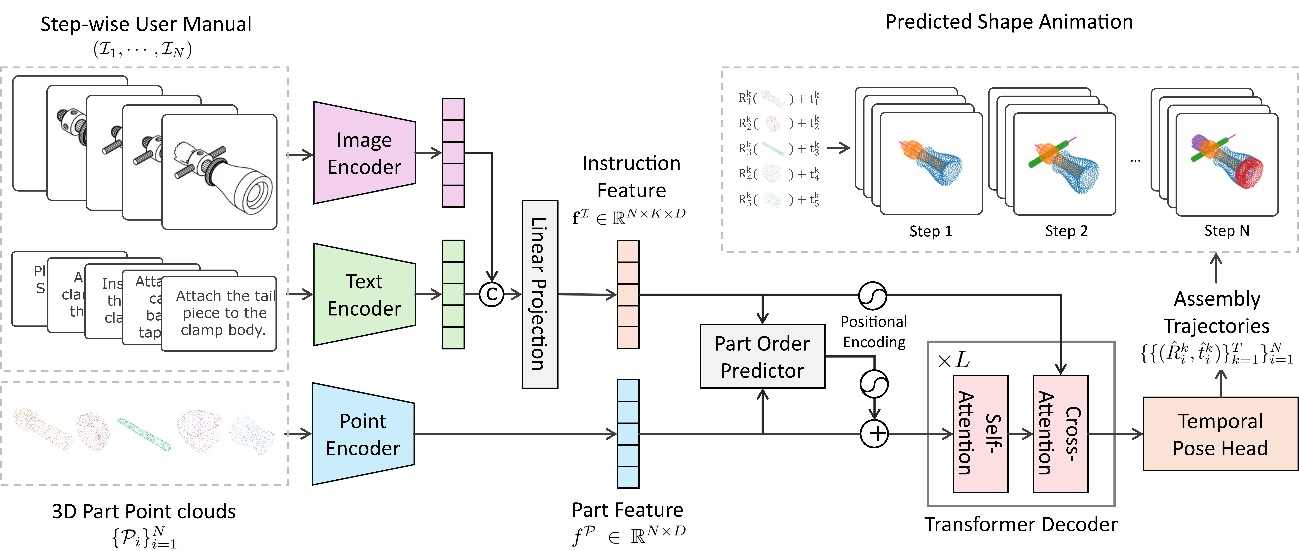

We also propose AssemblyDyno, a transformer-based model that uses the instruction manual and the 3D shape of each part to jointly predict the assembly order and the part assembly trajectories. AssemblyDyno outperforms prior work in both assembly pose estimation and trajectory feasibility, where the latter is evaluated with our physics-based simulations.

Problem Setup: Assembly of Complex Industrial Objects

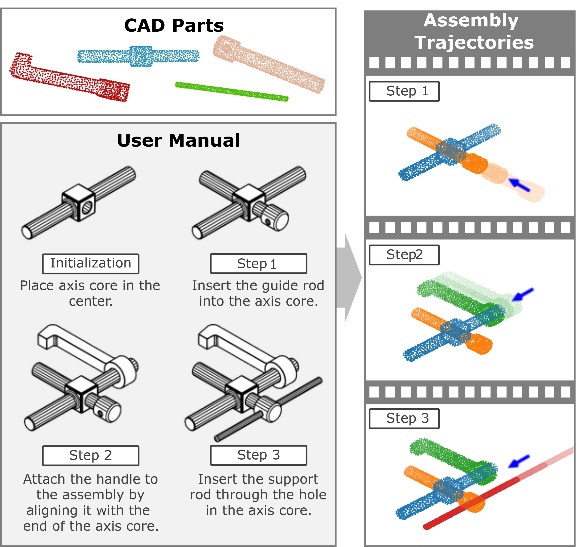

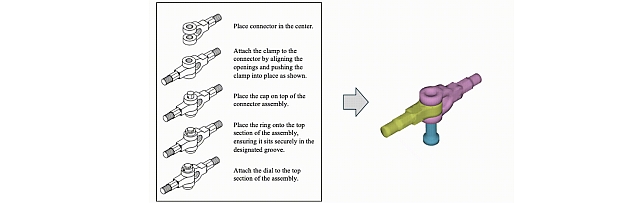

In this work, we consider the problem of assembling complex industrial objects whose parts vary in shape and size and require intricate maneuvers to assemble. Given a stepwise manual with diagrams and text, as shown on the left, we aim to assemble the corresponding set of 3D parts, represented as 3D point clouds, in a virtual environment driven by a physics engine. The output is a stepwise assembly trajectory for each part, which can be rendered into 4D animations, as shown on the right.
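
As a rough illustration of this setup, the inputs and outputs can be written down as plain data types. This is a minimal sketch, not the dataset's actual schema: every class and field name below (`ManualStep`, `Part`, `Pose6DoF`, `StepPrediction`, `assembly_order`) is hypothetical, and the axis-angle rotation is just one possible way to encode the rotational part of a 6-DoF pose.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ManualStep:
    """One step of the instruction manual: a diagram plus its text (hypothetical layout)."""
    diagram_path: str
    text: str


@dataclass
class Part:
    """A 3D part represented as a point cloud."""
    name: str
    points: List[Vec3]


@dataclass
class Pose6DoF:
    """A rigid pose: 3 translation DoF plus 3 rotation DoF (axis-angle, radians)."""
    translation: Vec3
    rotation_axis: Vec3 = (0.0, 0.0, 1.0)
    rotation_angle: float = 0.0


@dataclass
class StepPrediction:
    """Output for one assembly step: which part moves and the waypoints it follows."""
    part_index: int
    trajectory: List[Pose6DoF] = field(default_factory=list)


def assembly_order(predictions: List[StepPrediction]) -> List[int]:
    """Read the assembly order off the stepwise predictions."""
    return [p.part_index for p in predictions]


# Toy example: a base that stays in place and a bolt lowered onto it.
parts = [Part("base", [(0.0, 0.0, 0.0)]), Part("bolt", [(0.0, 0.0, 1.0)])]
plan = [
    StepPrediction(0, [Pose6DoF((0.0, 0.0, 0.0))]),
    StepPrediction(1, [Pose6DoF((0.0, 0.0, 0.5)), Pose6DoF((0.0, 0.0, 0.0))]),
]
print(assembly_order(plan))
```

Keeping the order and the per-part waypoints in one structure mirrors the joint prediction described on this page: the position of a `StepPrediction` in the list gives the order, and its `trajectory` gives the motion.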

AssemblyBench: Synthetic Industrial Assembly Dataset

Existing assembly datasets focus on simplified scenarios and overlook the shape complexity and assembly trajectories found in industrial assemblies. AssemblyBench addresses this gap with a synthetic dataset of 2,789 industrial objects, each with an automatically generated multimodal instruction manual, corresponding 3D part models, and part assembly trajectories.

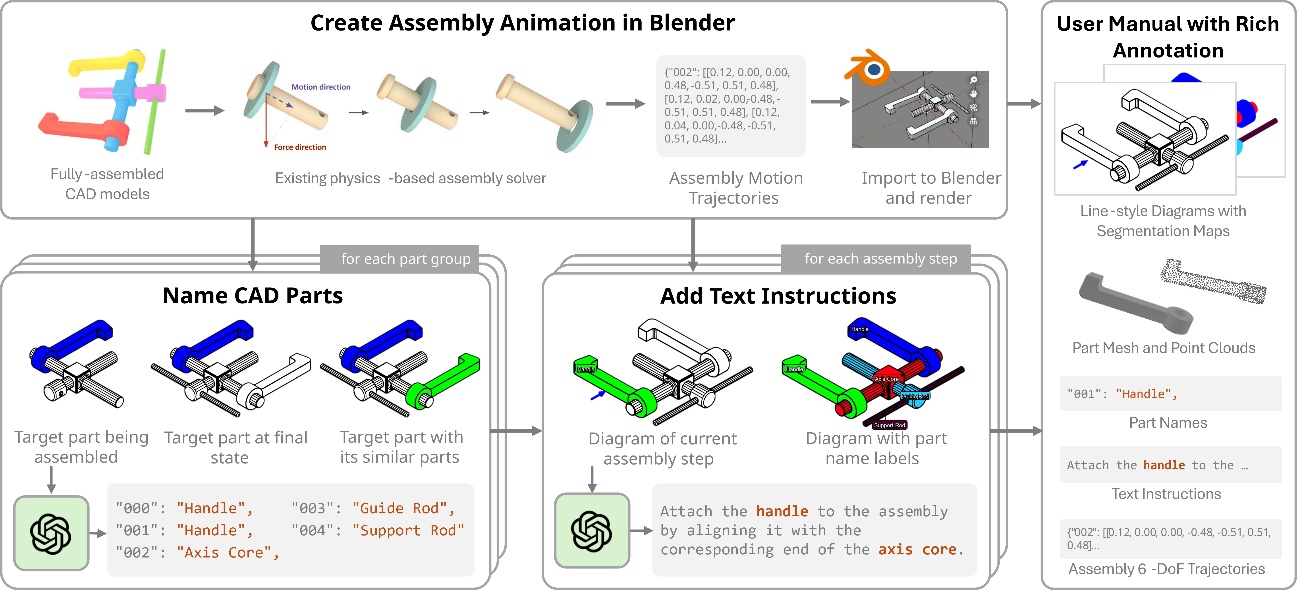

The dataset is created by our automated instruction manual generation pipeline. Starting from a CAD model of an assembled object, we find the part assembly trajectories using a physics engine, then import the resulting animations into Blender. The Blender renderings are then used to prompt a large vision-language model, which generates names for the CAD parts and text-based assembly instructions.

We provide diverse assemblies where parts vary widely in shape, size, and geometry, and each assembly follows its own unique motion trajectory.

AssemblyDyno: A Holistic Assembly Process Prediction Framework

To tackle the challenges posed by AssemblyBench, we introduce AssemblyDyno, a new transformer-based architecture designed to predict both the correct assembly order and the full 6-DoF (six degrees of freedom) motion trajectory of every part. AssemblyDyno learns to align the stepwise instructions with the corresponding 3D point-cloud parts using a soft-attention mechanism. This allows the model to identify which component each instruction refers to and how that component must move, in both translation and rotation, to complete its assembly. For every part, AssemblyDyno predicts a discrete sequence of physically meaningful transformations that together chart the entire motion path needed for successful assembly.
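
To make the idea of soft alignment concrete, the sketch below computes scaled dot-product attention weights between instruction-step embeddings and part embeddings. It is only an illustration of the general technique under our own assumptions, not the AssemblyDyno architecture: the function names (`softmax`, `soft_align`) and the toy 2D embeddings are hypothetical, and a real model would learn these embeddings.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def soft_align(step_embeddings: List[List[float]],
               part_embeddings: List[List[float]]) -> List[List[float]]:
    """Return weights[i][j]: how strongly instruction step i attends to part j."""
    scale = math.sqrt(len(part_embeddings[0]))
    weights = []
    for query in step_embeddings:
        scores = [sum(a * b for a, b in zip(query, key)) / scale
                  for key in part_embeddings]
        weights.append(softmax(scores))
    return weights


# Toy embeddings (assumed values): two instruction steps, three parts.
steps = [[1.0, 0.0], [0.0, 1.0]]
part_vecs = [[0.1, 2.0], [3.0, 0.0], [0.5, 0.5]]

weights = soft_align(steps, part_vecs)
# The most attended part for each step is a hard reading of the soft alignment.
referenced = [max(range(len(row)), key=row.__getitem__) for row in weights]
print(referenced)
```

Each row of `weights` is a probability distribution over parts, so the alignment stays differentiable during training while still indicating which part a step most likely refers to.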

Compared to the previous state-of-the-art method, AssemblyDyno generates motion trajectories that are far more realistic, closely matching the true assembly process and capturing subtle motions that previous approaches miss.

Simulator-Based Evaluation in AssemblyBench

Here we show some scenarios that illustrate why a physics-engine-based evaluation is important. Some of the predictions made by our AssemblyDyno model look plausible when only the 3D point clouds are considered, yet are infeasible once physics is enabled. Evaluating in the simulator therefore provides a stricter test of the quality of the predicted assemblies.
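
The gap between a plausible final pose and a feasible trajectory can be illustrated with a purely geometric stand-in for the simulator. The sketch below is not the physics engine used in AssemblyBench; it is a simplified clearance check we assume for illustration, with hypothetical function names and toy coordinates. It applies each 6-DoF waypoint (translation plus axis-angle rotation) to a moving part and reports the first waypoint at which the part comes closer than a chosen clearance to the parts already in place.

```python
import math


def rotate(point, axis, angle):
    """Rotate a point about a unit axis through the origin (Rodrigues' formula)."""
    x, y, z = point
    ux, uy, uz = axis
    c, s = math.cos(angle), math.sin(angle)
    dot = ux * x + uy * y + uz * z
    cross = (uy * z - uz * y, uz * x - ux * z, ux * y - uy * x)
    return (
        x * c + cross[0] * s + ux * dot * (1 - c),
        y * c + cross[1] * s + uy * dot * (1 - c),
        z * c + cross[2] * s + uz * dot * (1 - c),
    )


def apply_pose(points, translation, axis=(0.0, 0.0, 1.0), angle=0.0):
    """Apply a rigid transform (rotate, then translate) to a point cloud."""
    tx, ty, tz = translation
    moved = []
    for p in points:
        rx, ry, rz = rotate(p, axis, angle)
        moved.append((rx + tx, ry + ty, rz + tz))
    return moved


def min_distance(cloud_a, cloud_b):
    """Smallest point-to-point distance between two clouds (brute force)."""
    return min(math.dist(p, q) for p in cloud_a for q in cloud_b)


def first_blocked_waypoint(moving, fixed, waypoints, clearance):
    """Index of the first waypoint that violates the clearance, or None if none does."""
    for i, (translation, axis, angle) in enumerate(waypoints):
        posed = apply_pose(moving, translation, axis, angle)
        if min_distance(posed, fixed) < clearance:
            return i
    return None


# Toy scene: a small wall at x = 1 stands between the start and the goal at x = 2.
z_axis = (0.0, 0.0, 1.0)
wall = [(1.0, -1.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
peg = [(0.0, 0.0, 0.0)]

straight = [((0.0, 0.0, 0.0), z_axis, 0.0),
            ((1.0, 0.0, 0.0), z_axis, 0.0),   # passes through the wall
            ((2.0, 0.0, 0.0), z_axis, 0.0)]
detour = [((0.0, 0.0, 0.0), z_axis, 0.0),
          ((0.0, 0.0, 2.0), z_axis, 0.0),     # lift over the wall
          ((2.0, 0.0, 2.0), z_axis, 0.0),
          ((2.0, 0.0, 0.0), z_axis, 0.0)]

print(first_blocked_waypoint(peg, wall, straight, clearance=0.5))
print(first_blocked_waypoint(peg, wall, detour, clearance=0.5))
```

Both trajectories end at the same final pose, so a metric that only compares final poses cannot tell them apart; only a check along the path reveals that the straight-line motion is blocked. A real physics engine additionally accounts for contact, surfaces, and continuous motion, which this point-sampled check does not.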

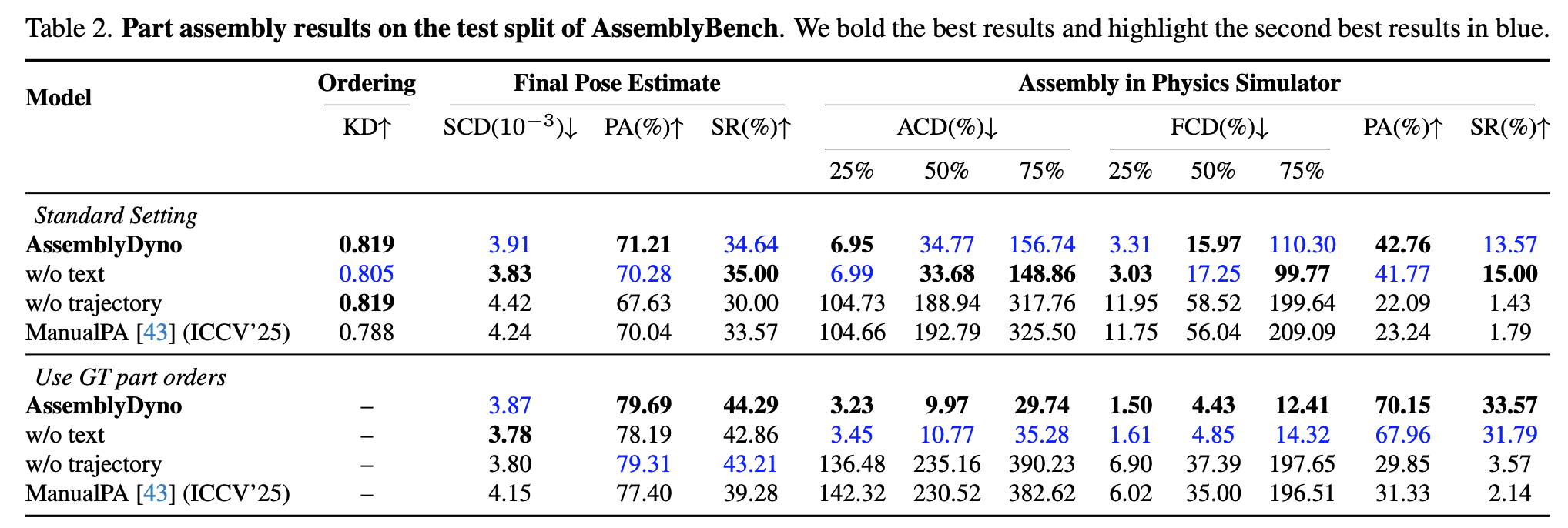

We compare state-of-the-art methods on the AssemblyBench dataset in a variety of settings, covering prediction of the part order, the final assembled poses of the parts, and the assembly trajectories. Our evaluation also uses a physics engine, as shown on the right side of the results table.
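
This page does not spell out the metrics behind each setting. As one assumed example of how a predicted part order could be scored against the ground truth, the sketch below computes Kendall's tau, a standard rank-correlation measure; it is our illustrative choice and is not stated to be the metric used in the AssemblyBench evaluation. The function name and the toy orders are hypothetical.

```python
from itertools import combinations
from typing import List


def kendall_tau(predicted: List[int], reference: List[int]) -> float:
    """Kendall rank correlation between two orderings of the same set of parts.

    Returns 1.0 for identical orders and -1.0 for fully reversed orders.
    """
    rank = {part: i for i, part in enumerate(predicted)}
    concordant = 0
    discordant = 0
    # combinations() yields pairs (a, b) where a precedes b in the reference.
    for a, b in combinations(reference, 2):
        if rank[a] < rank[b]:
            concordant += 1
        else:
            discordant += 1
    pairs = concordant + discordant
    return (concordant - discordant) / pairs if pairs else 1.0


reference_order = [0, 1, 2, 3]
print(kendall_tau([0, 1, 2, 3], reference_order))  # identical order
print(kendall_tau([3, 2, 1, 0], reference_order))  # reversed order
print(kendall_tau([0, 2, 1, 3], reference_order))  # one adjacent swap
```

A pairwise measure like this gives partial credit when most parts are in the right relative order, which an exact-match score would not.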