TR2026-029

MMHOI: Modeling Complex 3D Multi-Human Multi-Object Interactions


- Kogashi, K., Cherian, A., Kuo, M.-Y.J., "MMHOI: Modeling Complex 3D Multi-Human Multi-Object Interactions", IEEE Winter Conference on Applications of Computer Vision (WACV), March 2026, pp. 1512-1521.

@inproceedings{Kogashi2026mar,
  author = {Kogashi, Kaen and Cherian, Anoop and Kuo, Meng-Yu Jennifer},
  title = {{MMHOI: Modeling Complex 3D Multi-Human Multi-Object Interactions}},
  booktitle = {IEEE Winter Conference on Applications of Computer Vision (WACV)},
  year = 2026,
  pages = {1512--1521},
  month = mar,
  url = {https://www.merl.com/publications/TR2026-029}
}


Abstract:

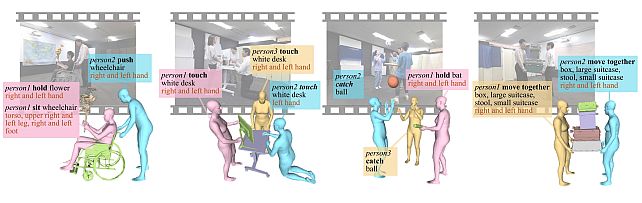

Real-world scenes often feature multiple humans interacting with multiple objects in ways that are causal, goal-oriented, or cooperative. Yet existing 3D human-object interaction (HOI) benchmarks consider only a fraction of these complex interactions. To close this gap, we present MMHOI, a large-scale Multi-human Multi-object Interaction dataset consisting of images from 12 everyday scenarios. MMHOI offers complete 3D shape and pose annotations for every person and object, along with labels for 78 action categories and 14 interaction-specific body parts, providing a comprehensive testbed for next-generation HOI research. Building on MMHOI, we present MMHOI-Net, an end-to-end transformer-based neural network for jointly estimating human–object 3D geometries, their interactions, and associated actions. A key innovation in our framework is a structured dual-patch representation for modeling objects and their interactions, combined with action recognition to enhance the interaction prediction. Experiments on MMHOI and the recently proposed CORE4D datasets demonstrate that our approach achieves state-of-the-art performance in multi-HOI modeling, excelling in both accuracy and reconstruction quality. The MMHOI dataset is available at https://zenodo.org/records/17711786.